The Code You Didn't Authorize

Shadow tools, agentic risk, and the governance gap in AI-assisted development

It's a Wednesday morning. The CISO is reviewing a vendor security questionnaire when a Slack message from an engineer catches her eye.

"Hey—quick question. If we're using Copilot in our IDE, does that count as a third-party data processor?"

She pauses. "We don't use Copilot."

"We do. Most of the backend team has been on it since August."

She pulls the license records. Nothing. No procurement. No security review. No data handling assessment. Engineers had signed up individually, connected their repos, and started pasting code—including internal API logic, database schemas, and in at least one case, environment variables with production credentials.

Nobody asked permission. Nobody needed to. The tool just worked.

Now it's a security incident, a procurement gap, a policy gap, and a board question—all at once.

The Pattern

AI coding assistants are the fastest-adopted category of enterprise AI. Not because leadership chose them. Because developers did.

GitHub Copilot alone now has over 20 million users and is deployed across 90% of Fortune 100 companies. According to a 2025 Jellyfish report, 90% of engineering teams now use AI in their workflows, up from 61% a year earlier. The 2025 Stack Overflow developer survey found 82% of developers using ChatGPT and 68% using Copilot, with most combining multiple tools. And here's the governance detail that matters: 94% of companies surveyed by OpsLevel have teams actively using AI coding assistants, but only a third report that more than half of their developers have formally adopted them. The rest are using these tools informally: individual accounts, no procurement, no security review.

By the time leadership notices, the tool is embedded in workflows, wired into repositories, and generating code that ships to production.

That's not an adoption success story. That's a governance gap with a head start.

I say this as someone who uses AI coding assistants every day. At AI Guru, they're part of how we build. I use them. Many on my development team use them. But not all—and that's fine. The ones who use them are faster at scaffolding, testing, and refactoring. The ones who don't have their own workflows that work.

What matters isn't whether people use the tools. It's whether the organization knows who's using them, what they're being used for, and what guardrails exist. We made those decisions deliberately. Most organizations haven't—because nobody asked.

Why This Is Different

Most AI tools your organization evaluates go through intake: security review, legal review, data classification, procurement. AI coding assistants skip the line for three reasons.

They live inside developer tools. IDE extensions don't trigger the same procurement signals as a SaaS platform. They look like productivity features, not enterprise software.

They touch everything. Unlike a chatbot scoped to one workflow, a coding assistant sees your codebase, your architecture, your logic, your secrets. The attack surface isn't a conversation—it's your intellectual property.

They're becoming agentic. The newest generation doesn't just suggest code. It opens pull requests, modifies files across repositories, runs commands, and interacts with internal systems. Once a tool can act—not just recommend—the risk profile changes fundamentally.

This means a tool that started as autocomplete now has potential access to proprietary algorithms, customer data patterns, authentication logic, and deployment pipelines. And in many organizations, no one outside the engineering team knows it's there.

The Governance Questions

When this surfaces, and it will, leadership will need answers to questions most organizations haven't asked yet.

What has been exposed? Not just "what tool are they using," but what code, data, and logic has been sent to external models. Were prompts retained? Used for training? Is there a contractual basis for deletion?

Who authorized this? If the answer is "nobody," you have a shadow IT problem with IP implications. If the answer is "engineering leadership," you need to know what security review occurred and what policies govern usage.

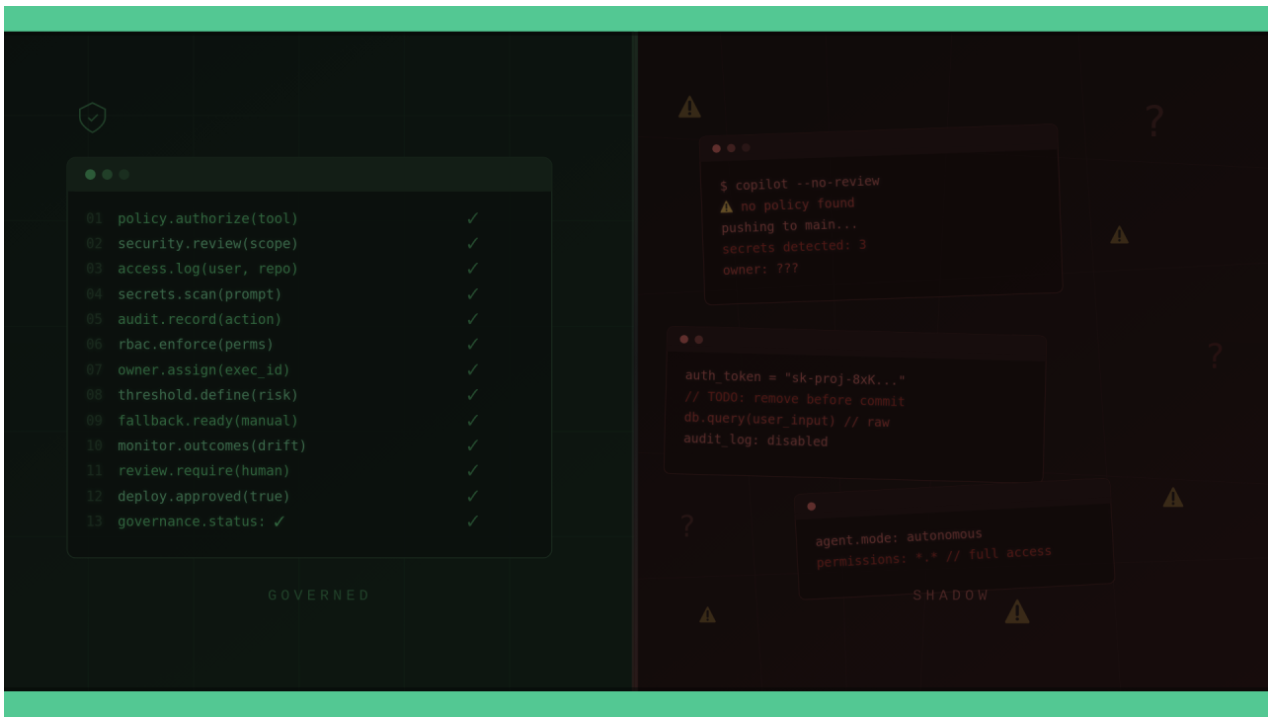

What can the tool do? There's a material difference between a tool that suggests code in an editor and one that can open pull requests, modify tickets, fetch internal documentation, or execute commands. If the tool can act, you need least-privilege permissions, scoped access tokens, and audit trails. Most organizations haven't classified this distinction.

Where are the restricted zones? Not all code carries the same risk. Authentication flows, encryption, payment processing, and anything under regulatory audit should have heightened scrutiny—or be off-limits to AI generation entirely. The question is whether those boundaries exist and are enforced.
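One way to make those boundaries enforceable rather than aspirational is a path-based check in CI or a pre-merge hook. The sketch below is illustrative only: the directory patterns, the `RESTRICTED_ZONES` name, and the assumption that a change can be flagged as AI-assisted (for example, through a commit trailer your team agrees on) are all hypothetical, not features of any particular tool.

```python
from fnmatch import fnmatchcase

# Hypothetical restricted zones; real patterns depend on your repository layout.
RESTRICTED_ZONES = {
    "auth/*": "authentication flows",
    "crypto/*": "encryption",
    "payments/*": "payment processing",
}


def restricted_hits(changed_paths, ai_assisted):
    """Return (path, reason) pairs for AI-assisted changes that touch a restricted zone."""
    if not ai_assisted:
        return []
    hits = []
    for path in changed_paths:
        for pattern, reason in RESTRICTED_ZONES.items():
            # fnmatchcase's "*" also matches "/" so nested files are covered.
            if fnmatchcase(path, pattern):
                hits.append((path, reason))
                break
    return hits
```

A CI job could fail the build, or route the change to human-only review, whenever `restricted_hits` returns a non-empty list.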

What's the fallback? If the tool is compromised, sunsets its enterprise tier, or changes its data practices—what happens? If your developers can't work without it, you have a dependency without a contingency.

What You Need Ready

The companies handling this well aren't banning AI coding tools. They're governing them the way they govern any critical developer infrastructure.

A sanctioned toolset with a security baseline. One approved primary tool, a short list of approved alternatives for niche needs, and a periodic re-approval cycle. Every approved tool gets a security review covering data handling, retention, training policies, admin controls, and incident response.

A federated governance model. A central team sets baseline policy, the approved vendor list, security controls, and metrics. Individual teams choose how to integrate into their workflows. Centralized-only models kill adoption. Team-led-only models create risk sprawl.

Clear usage policies with concrete examples. Not a generic acceptable use policy. Specific guidance: what not to paste (secrets, PII, proprietary algorithms), what's safe to generate (tests, glue code, internal tooling), and what requires human-only review (security-critical flows, regulated logic). Developers need examples, not abstractions.
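The "what not to paste" rule is easier to follow when something checks it before text leaves the machine. Here is a minimal sketch of that idea, assuming a hypothetical `find_secrets` helper that runs before a prompt is sent. The three patterns are illustrative and far from complete; a real deployment would lean on a maintained secret scanner rather than a handful of regular expressions.

```python
import re

# Illustrative patterns only; they will miss many secrets and flag some harmless text.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "credential_assignment": re.compile(
        r"(?i)\b\w*(password|secret|token|api_key)\w*\s*[=:]\s*\S+"
    ),
}


def find_secrets(text):
    """Return the sorted names of the patterns that match the given text."""
    return sorted(
        name for name, pattern in SECRET_PATTERNS.items() if pattern.search(text)
    )
```

For example, `find_secrets("DB_PASSWORD=hunter2")` flags a credential assignment, while an ordinary helper function passes through clean.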

Agentic capabilities treated as a separate risk tier. If a tool can open PRs, change tickets, fetch from internal wikis, or run commands, it needs scoped permissions, approval workflows for critical actions, and comprehensive audit logging. "Suggest" and "act" are different governance categories.
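To make the "suggest" versus "act" split concrete, here is a rough sketch of a gate that sits between an assistant and the systems it can touch. Everything named here is an assumption for illustration: the action names, the choice of which actions count as critical, and the `Gate` class itself. It is not how any specific vendor implements permissions.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical action names; map these to whatever your tools actually expose.
SUGGEST_ACTIONS = {"suggest_code", "explain_code"}
ACT_ACTIONS = {"open_pr", "change_ticket", "fetch_wiki", "run_command"}
NEEDS_APPROVAL = {"open_pr", "run_command"}  # assumed "critical" actions


@dataclass
class Gate:
    audit_log: list = field(default_factory=list)

    def authorize(self, tool, action, granted_scopes, approved_by=None):
        """Decide whether an action may proceed, and record the decision either way."""
        if action in SUGGEST_ACTIONS:
            allowed, reason = True, "suggest tier"
        elif action not in ACT_ACTIONS:
            allowed, reason = False, "unknown action"
        elif action not in granted_scopes:
            allowed, reason = False, "scope not granted"
        elif action in NEEDS_APPROVAL and approved_by is None:
            allowed, reason = False, "approval required"
        else:
            allowed, reason = True, "act tier"
        # Denials are logged too, so the audit trail shows what was attempted.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "tool": tool,
            "action": action,
            "allowed": allowed,
            "reason": reason,
            "approved_by": approved_by,
        })
        return allowed
```

The design choice worth noting is that the default is deny: an action the gate doesn't recognize, or a scope that wasn't explicitly granted, is refused and logged.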

Continuous monitoring, not a one-time review. Usage rates by team and repository. Quality signals: review comments, reverts, incident correlations. Quarterly check-ins on vendor posture, model changes, and policy drift. The vendor ships new capabilities constantly—your approved state can go stale fast.
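As one example of a quality signal, the sketch below compares revert rates for AI-assisted changes against everything else, broken out by team. It assumes you already have a list of merged changes with `team`, `ai_assisted`, and `reverted` fields; those field names and the way a change gets labeled as AI-assisted are hypothetical and would depend on your own tooling.

```python
from collections import defaultdict


def revert_rate_by_team(merged_changes):
    """Compare revert rates for AI-assisted and other changes, per team."""
    # Each bucket holds [reverted_count, total_count].
    counts = defaultdict(lambda: {"ai": [0, 0], "other": [0, 0]})
    for change in merged_changes:
        kind = "ai" if change["ai_assisted"] else "other"
        bucket = counts[change["team"]][kind]
        bucket[0] += int(change["reverted"])
        bucket[1] += 1
    # A rate of None means the team had no changes of that kind.
    return {
        team: {
            kind: (reverted / total if total else None)
            for kind, (reverted, total) in kinds.items()
        }
        for team, kinds in counts.items()
    }
```

A gap between the two rates is a prompt for a conversation, not a verdict: small samples and differences in the kind of work being done can easily explain it.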

The Real Test

Ask your engineering leadership three questions this week:

How many AI coding tools are active in our environment right now—sanctioned and unsanctioned?

What code and data have been sent to external models in the last 90 days?

If we needed to revoke access to all AI coding tools by end of day, could we?

If the first answer is "we're not sure," you have a visibility problem. If the second answer is "we don't know," you have a data exposure problem. If the third answer is "not easily," you have a dependency problem.

Any one of those is manageable. All three together is a governance incident waiting for a trigger.

The Bottom Line

AI coding assistants aren't a future board issue. They're a current one—most boards just don't know it yet.

The tools are already inside the building. They're already touching your IP. And the newest ones are starting to take actions, not just make suggestions.

The question isn't whether to allow them. Developers have already answered that. The question is whether governance has caught up to what's already happening.

If your organization can deploy AI into its most sensitive environment—the codebase—without triggering a single control, the gap isn't in the technology.

It's in the oversight.

P.S. Ask your CISO or VP of Engineering one question this week: "How many AI coding tools are developers using right now that haven't been through security review?" If the answer takes more than a minute, that's your signal. The tools are already in. The governance should be too.